AI Annotator

Overview

Consumers' needs change constantly. Our AI automation is built to drive consumer engagement with Curiously Human™ interactions that balance human agents, intelligent automations, and conversational AI. AI Annotator supports this by giving agents, bot tuners, and QA teams a simple, efficient interface for providing feedback on bot performance.

AI Annotator lets you turn consumer messages into training phrases, which improves intent matching and overall bot performance. Submitted feedback can be reviewed and used to correct your AI automation. This process of labeling data that is later used to improve an AI model is called "AI annotation."

The "annotating agent"

By the nature of their work, agents are exposed to hundreds and sometimes thousands of conversations. As a result, these agents become “distinguished experts” in identifying consumers' needs and wants based on text. When agents receive a conversation, they quickly understand the consumers’ intent, and if the intent is not clear, they have the option of asking the consumer to clarify it. Therefore, agents are in an ideal position to identify AI automation issues and suggest a correct solution. Agents can use their expertise to suggest an intent for messages in which bots did not identify the intent. In many cases, permission to annotate is granted to the more experienced agents.

LivePerson's AI annotation management empowers agents to:

- Seamlessly view annotations and transcripts to understand the context of a message

- Edit and classify domains and intents of the incoming annotations

- Review existing training phrases in a chosen intent and compare with the new annotation

- Accept the annotation to be added to the intent, or archive it for future reference - closing the annotation loop all in one place

Limitations

AI Annotator doesn't work on third-party bots.

Get started

AI Annotator requires backend enablement. Contact your LivePerson representative for more info.

Understand intent annotations

Sometimes, a bot doesn't recognize the intent behind a consumer’s message. For example, the consumer might say, “I would like to activate my service,” but the bot doesn't recognize the intent. This happens when the training phrases that comprise your intents aren't yielding a high enough confidence level to trigger an intent identification.

When an intent isn't recognized, a fallback flow is triggered, and the consumer might be asked to rephrase the message.

Finally, if the bot doesn't recognize the intent in the rephrased message, the conversation might be escalated to an agent for handling.
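The flow described above (match on confidence, fall back to a rephrase request, then escalate) can be sketched as follows. This is a minimal illustration, not the product's implementation: the threshold value, the `Turn` type, and the `route_message` function are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical threshold; the real confidence level is set by the intent model.
CONFIDENCE_THRESHOLD = 0.7


@dataclass
class Turn:
    action: str            # "handle_intent", "ask_rephrase", or "escalate"
    intent: Optional[str]  # matched intent name, if any


def route_message(scores: Dict[str, float], already_rephrased: bool) -> Turn:
    """Decide what the bot does with one consumer message.

    `scores` maps intent names to confidence values between 0 and 1.
    """
    best_intent, best_score = max(
        scores.items(), key=lambda kv: kv[1], default=(None, 0.0)
    )
    if best_intent is not None and best_score >= CONFIDENCE_THRESHOLD:
        return Turn("handle_intent", best_intent)
    # No intent cleared the threshold, so the fallback flow applies.
    if not already_rephrased:
        return Turn("ask_rephrase", None)
    # The rephrased message was not recognized either.
    return Turn("escalate", None)
```

In this sketch, a message such as “I would like to activate my service” that scores below the threshold on every intent first triggers a rephrase request and, if the second attempt also fails, an escalation. These are the messages that become candidates for annotation.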

Your goal then is to quickly find the consumer intent that hasn't been identified and add it to the bot training model as a training phrase. By doing so, the bot can identify the intents behind similar consumer messages in the future and prevent conversations from being transferred to human agents over and over again.

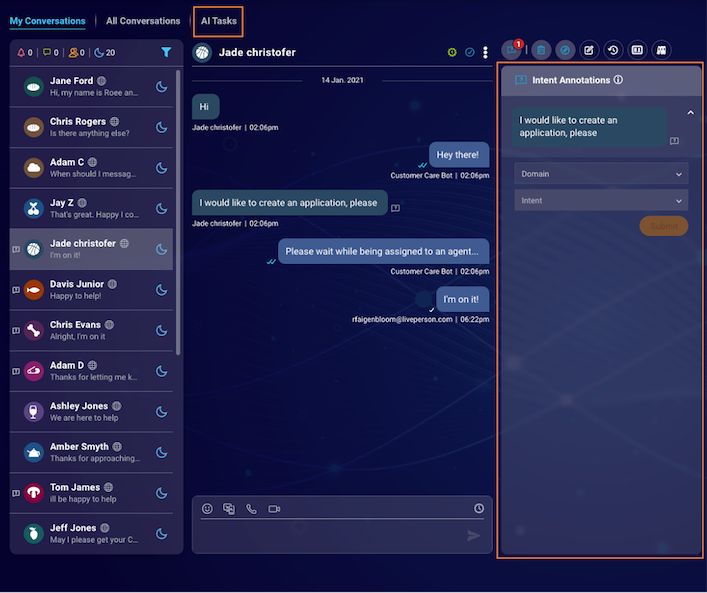

AI Annotator helps you achieve this by providing users (e.g., agents and bot tuners) who view conversations with the ability to annotate the correct intent. Once the annotation is submitted, the consumer phrase along with the suggested domain and intent is presented in the AI Tasks tab. A user with permission to view the tab and edit the intent model can add the consumer message to the correct intent.

Set up intent annotations

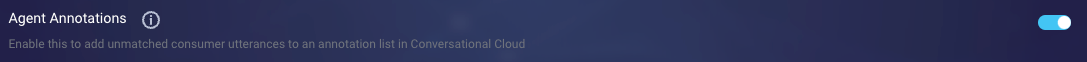

Step 1 - Initiate bots to create intent annotations

1 - In LivePerson Conversation Builder, open the bot.

2 - In the menu in the upper-left, click More items.

3 - Select Bot Settings from the menu.

4 - On the settings page, scroll down and click More Settings to show more settings.

5 - Turn on the Agent Annotations setting, and click Save.

6 - Navigate to the bot's Agent Connectors page. Stop the connector, then start it again.

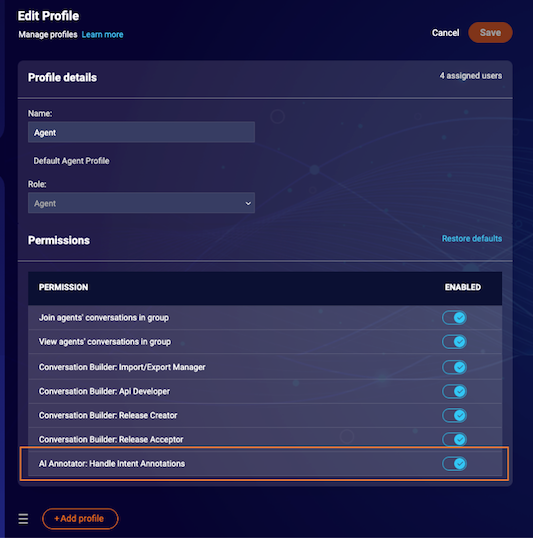

Step 2 - Provide users with permissions to open intent annotations

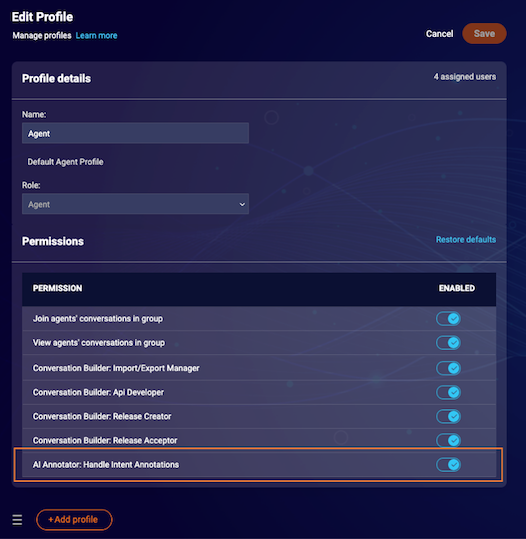

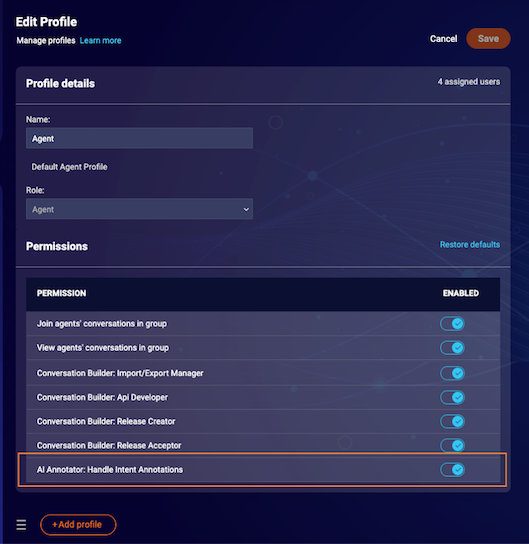

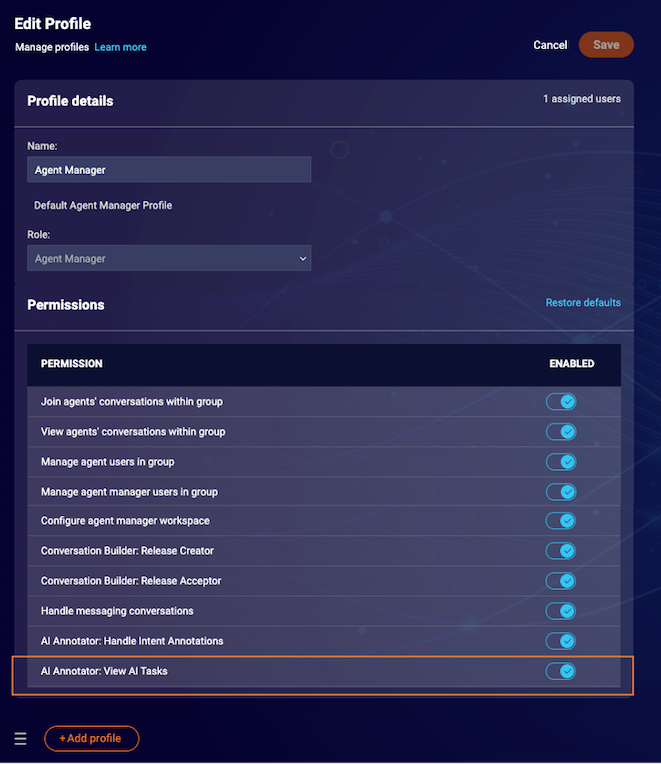

For the bot users that are expected to open annotations, open the profile, turn on the permission “AI Annotator: Handle Intent Annotations,” and click Save. This permission is available for the Agent and Agent Manager roles. It's Off by default.

Step 3 - Provide users with permissions to submit intent annotations

To enable human users (e.g., agents and bot tuners) to view the Intent Annotations widget and submit annotations, open the profile, turn on the permission “AI Annotator: Handle Intent Annotations,” and click Save. This permission is available for the Agent and Agent Manager roles. It's Off by default.

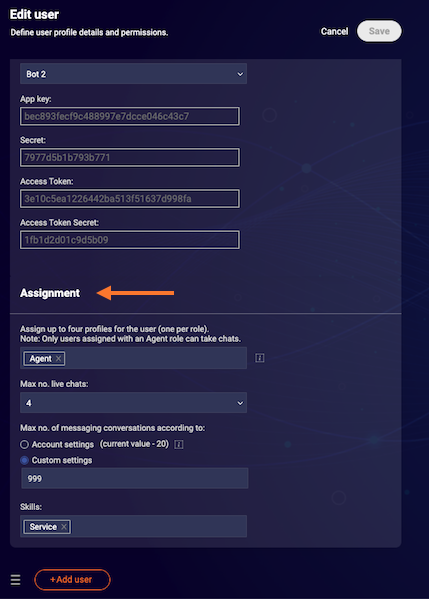

Next, click the Manage Users and Skills tab, and make sure that the role is added to the annotating users. When editing a specific user profile, you can update user roles in the Assignment section.

Step 4 - Provide users with permissions to view submitted intent annotations

To enable users to view, copy, and export the submitted annotations in the AI Tasks tab, open the profile, turn on the permission “AI Annotator: View AI Tasks,” and click Save. This permission is available for the Agent Manager and Admin roles. It's Off by default.

Expected results

With the configurations above done properly, in the Agent Workspace, a user with an “AI Annotator: Handle Intent Annotations” permission can see and submit annotations created by bots that were set to open annotations. In addition, users with the permission “AI Annotator: View AI Tasks” can review, copy, and export submitted annotations.
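As a quick reference, the relationship between roles, permissions, and what a user can do can be modeled as a small lookup. The permission names and role availability come from the steps above; the capability labels and the `allowed_actions` helper are hypothetical and exist only for illustration.

```python
# Permission names as they appear in the setup steps.
HANDLE = "AI Annotator: Handle Intent Annotations"
VIEW_TASKS = "AI Annotator: View AI Tasks"

# Hypothetical capability labels summarizing the expected results.
CAPABILITIES = {
    HANDLE: {"see_annotations", "submit_annotations"},
    VIEW_TASKS: {"review_annotations", "copy_annotations", "export_annotations"},
}

# Roles for which each permission is available (both are Off by default).
AVAILABLE_ROLES = {
    HANDLE: {"Agent", "Agent Manager"},
    VIEW_TASKS: {"Agent Manager", "Admin"},
}


def allowed_actions(role, enabled_permissions):
    """Return the actions a user can take, given a role and enabled permissions."""
    actions = set()
    for perm in enabled_permissions:
        # A permission only takes effect if it is available for the role.
        if role in AVAILABLE_ROLES.get(perm, set()):
            actions |= CAPABILITIES[perm]
    return actions
```

For example, `allowed_actions("Agent", {HANDLE})` yields the see and submit actions, while `allowed_actions("Agent", {VIEW_TASKS})` yields nothing, because that permission isn't available for the Agent role. The sketch doesn't model the bot-side Agent Annotations setting, which must also be on.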

Use intent annotations

Identify conversations with open intent annotations

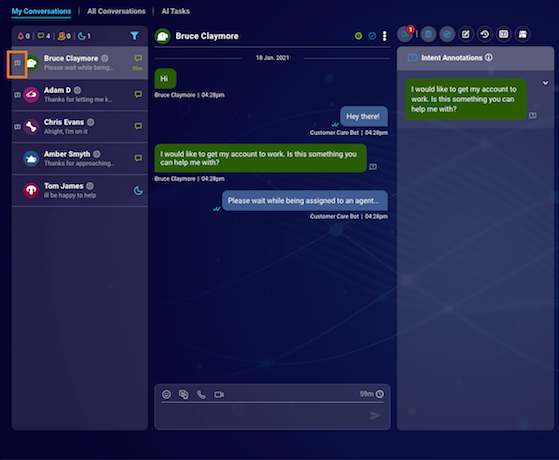

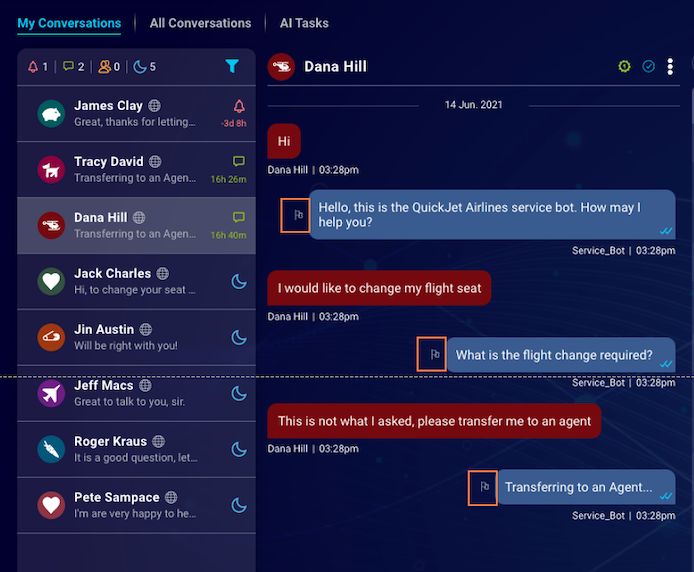

In the Agent Workspace, conversations with open intent annotations show a question mark icon to the left of the conversation preview avatar. Conversations with no intent annotations, or conversations in which all intent annotations have been submitted, don't show the icon.

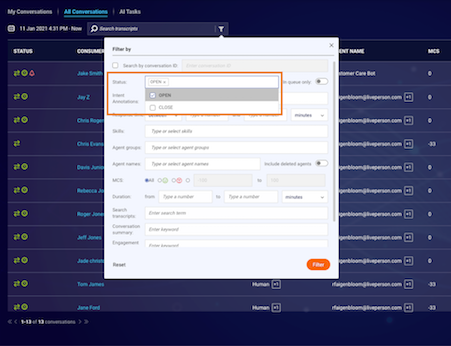

You can find all conversations with intent annotations using the filter in the All Conversations area.

Identify messages with annotations

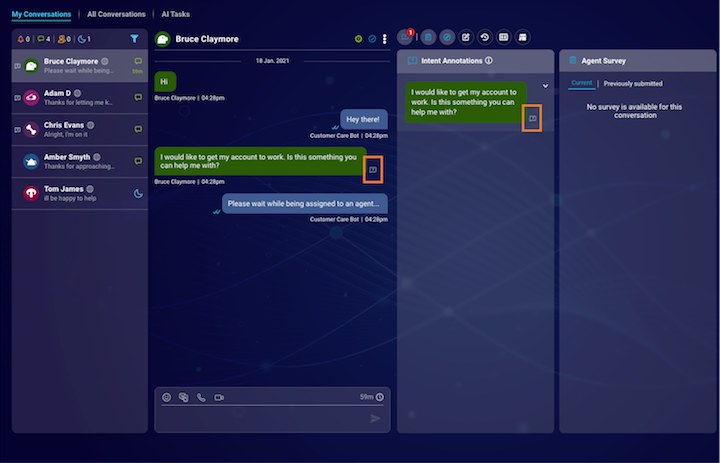

In the Agent Workspace, a message that has an open intent annotation shows the question mark icon within the transcript area. If the intent annotation has not been submitted, the color of the icon is white. If the intent annotation has been submitted, the color of the icon is green.

A second method of identifying messages with intent annotations is by using the Intent Annotations widget, which is available for all users with the permission to submit intent annotations. The widget is populated with the annotations from the conversation.

As in the transcript area, the Intent Annotations icon is white if the intent annotation hasn't been submitted, and green if it has been submitted. If you click the Intent Annotations icon, the relevant message is highlighted in the transcript area. If the message is not currently shown in the transcript area (i.e., it is above or below the fold), the transcript automatically scrolls to make it visible.

Submit an annotation

1 - Click the Intent Annotations widget icon.

2 - Within the widget, click the message to reveal the Domain and Intent dropdowns. These are populated with the domains and intents, respectively, that were defined by your brand.

3 - From each dropdown, select the domain and the intent, and then click Submit.

Non-intentful annotation filtering

Many of the messages that bots can't identify, and that therefore become open annotations, aren't actually intentful. For example, some consumers “troll” bots or send messages that carry no intent (for example, “Hi” or “Thank you”). Such messages often make up a large share of the unidentified messages that turn into annotations.

A dedicated classifier determines whether each annotation is intentful. Non-intentful annotations are screened out, which reduces the time and effort that agents spend on annotations and lets them focus on intentful ones only.
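To make the idea of screening concrete, here is a deliberately simple sketch. It is not the product's classifier: it swaps in a hypothetical phrase list, where a real classifier would be a trained model. The phrase list and all function names are assumptions made for this example.

```python
import re

# Hypothetical stand-in for the classifier: common greetings and courtesies.
NON_INTENTFUL_PHRASES = {"hi", "hello", "hey", "thanks", "thank you", "ok", "bye"}


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def is_intentful(message: str) -> bool:
    """Treat empty messages and known courtesy phrases as non-intentful."""
    cleaned = normalize(message)
    return bool(cleaned) and cleaned not in NON_INTENTFUL_PHRASES


def split_annotations(messages):
    """Separate messages into (intentful, non_intentful) lists, keeping order."""
    intentful = [m for m in messages if is_intentful(m)]
    non_intentful = [m for m in messages if not is_intentful(m)]
    return intentful, non_intentful
```

With this sketch, “Hi” and “Thank you!” land in the non-intentful list, while “I would like to activate my service” stays in the list that agents would annotate.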

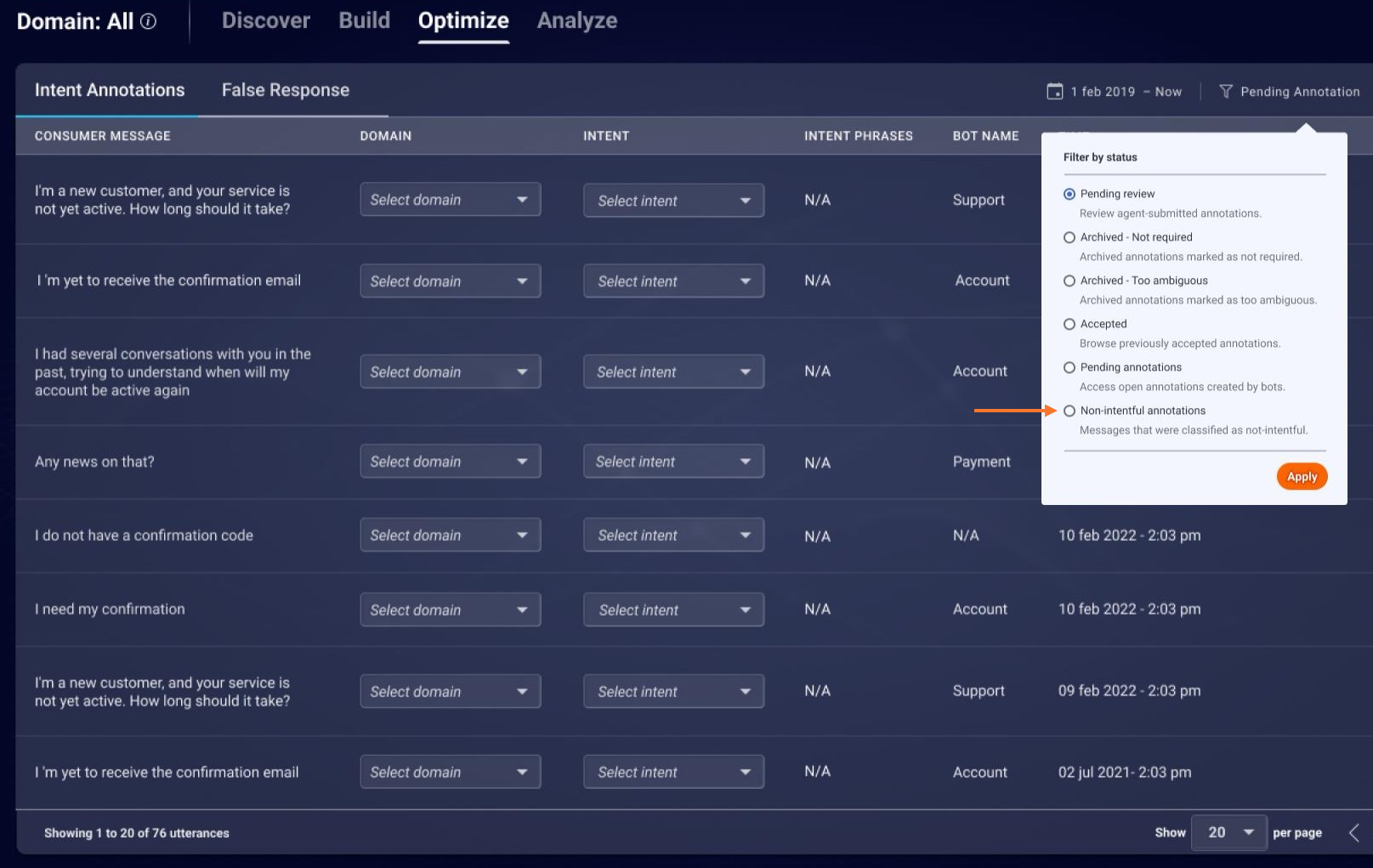

In Intent Manager, non-intentful annotations are available for review in:

- Annotation Management (the Optimize tab) under the "Non-intentful annotations" filter

- The "All Conversations" filters

Review an intent annotation

In the Agent Workspace, all submitted intent annotations, including the consumer message and the suggested domain and intent, are available for review in the AI Tasks tab.

You can filter the intent annotations by the time at which they were opened, navigate to the conversation to which an annotation belongs, and copy an individual message to the clipboard. You can also export the list of intent annotations to a CSV file.
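Once exported, the CSV file can be processed with ordinary tooling, for example to group consumer messages by their suggested intent before adding them as training phrases. The sketch below assumes hypothetical column names (`message`, `domain`, `intent`) and uses made-up sample rows; check the header row of your actual export and adjust accordingly.

```python
import csv
import io
from collections import defaultdict


def group_by_intent(csv_text: str) -> dict:
    """Group exported consumer messages by (domain, intent).

    Assumes columns named "message", "domain", and "intent" (hypothetical).
    """
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        groups[(row["domain"], row["intent"])].append(row["message"])
    return dict(groups)


# Made-up sample rows standing in for an exported file.
SAMPLE = """message,domain,intent
I would like to activate my service,Telco,activate service
Please turn on my plan,Telco,activate service
I want to change my seat,Airline,change seat
"""

grouped = group_by_intent(SAMPLE)
for (domain, intent), messages in grouped.items():
    print(f"{domain} / {intent}: {len(messages)} message(s)")
```

Grouping by domain and intent makes it easier to review several candidate training phrases for the same intent side by side.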

Add intent annotations to an intent

For information on this, see the Developer Center.

False Response annotations

What's a false response?

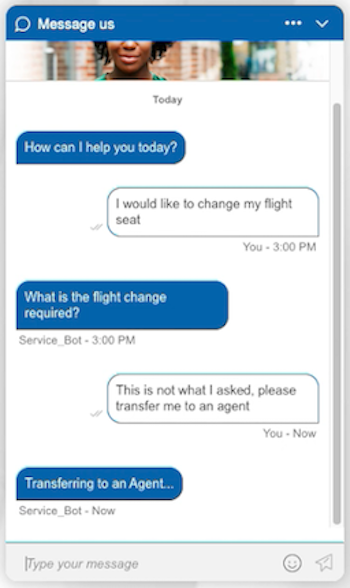

One common use case occurs when a bot provides an incorrect response to a consumer’s message. For example, if a consumer says, “I would like to change my flight seat,” the bot might identify a “change flight” intent instead of a “change seat” intent. This happens when the training phrases that comprise your intents lead the bot to identify the wrong intent. It also might be that the intent is recognized correctly, but the information included in the response is outdated or inaccurate.

Unlike the case where a bot doesn't recognize the user’s intent, False Response cases are often difficult to discern, since inherently the bot can't report an issue it is not aware of. In cases where the bot isn't successful in recovering from this situation, the conversation might be escalated to an agent for handling.

Your goal here is to quickly spot this issue and correct it, for example, by tuning the intent or tweaking the information included in the bot’s response.

AI Annotator False Response achieves this by providing users who view conversations with the ability to flag incorrect bot messages. Once a message is flagged, it appears in the AI Tasks tab. A user with permission to view the tab can then correct the issue.

Set up AI Annotator False Response

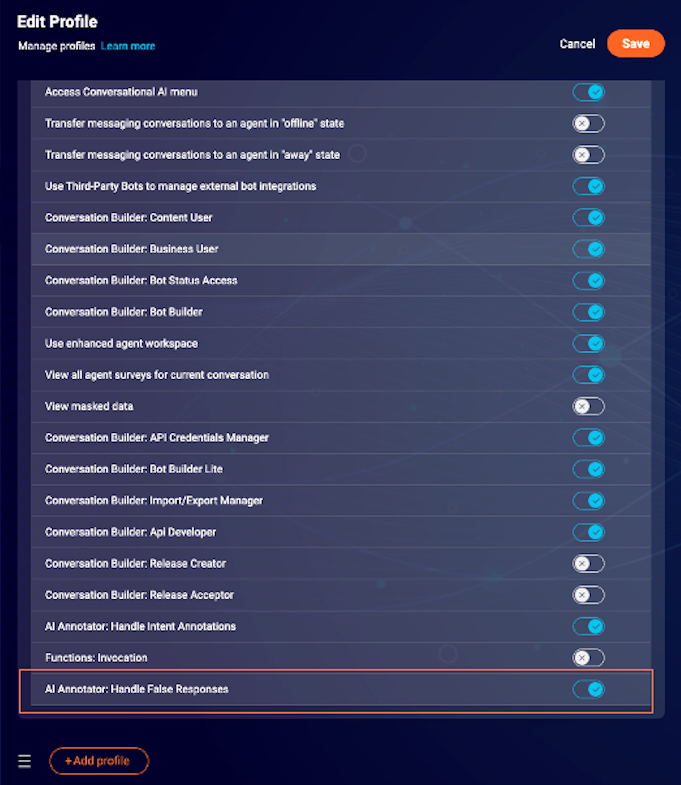

Step 1 - Provide users with permission to submit annotations

To enable human users (e.g., agents or bot tuners) to view the False Response flag and be able to flag messages, open the profile, turn on the permission “AI Annotator: Handle False Responses,” and click Save. This permission is available for the Agent and Agent Manager roles. It's set to Off by default.

Step 2 - Provide users with permission to view submitted annotations

The AI Tasks tab is an additional tab in the Agent Workspace that enables users to view, copy, and export the submitted annotations. To show this tab to users, open the profile, turn on the permission "AI Annotator: View AI Tasks," and click Save. This permission is available for the Agent Manager and Admin roles. It's set to Off by default.

Intent Analyzer is an Intent Management tool in the Conversational Cloud. Brands that use this tool may leverage it to display submitted annotations.

To view submitted annotations in Intent Analyzer

1 - Follow the steps required to view submitted annotations in the AI Tasks tab.

2 - Make sure that Intent Analyzer is configured for your account and that you have permission to view it.

Expected results

With the configurations above done properly, in the Agent Workspace, a user with an "AI Annotator: Handle False Responses" permission can flag any bot response as incorrect in conversations opened after the permission has been turned on.

In addition, users with the permission “AI Annotator: View AI Tasks” can review, copy, and export flagged messages. The users who performed the relevant configuration can view submitted annotations via Intent Analyzer.
