Trustworthy Generative AI for the enterprise

Introduction

Generative AI is transforming the future of business across all industries. It has the ability to create new and innovative solutions to complex problems in a way that was previously impossible with traditional computing methods. Generative AI unlocks new possibilities for innovation, efficiency, and customer engagement.

In this article, we explain what Generative AI is and how you can safely and productively take advantage of its capabilities within our trusted Conversational AI platform to achieve better business outcomes.

Technical terms and buzzwords

Natural Language Processing (NLP)

Natural Language Processing (NLP) is a branch of Artificial Intelligence (AI) that deals with enabling computers to understand, interpret, and manipulate human language (natural language) as it is spoken or written. NLP involves developing algorithms and models that can perform tasks such as language translation, sentiment analysis, speech recognition, and text summarization. These algorithms work by analyzing the structure and meaning of text or speech, including the context, syntax, grammar, and semantics, to derive insights and generate output. NLP has numerous applications in areas such as chatbots, language translation, voice assistants, and text analytics.

Generative AI

Generative AI uses machine learning models to produce unique content from patterns in data. This groundbreaking technology is built on massive datasets and relies on a mix of supervised and unsupervised learning methods, enabling generative models to produce human-like text, videos, audio files, images, and even code.

Large language models (LLMs)

Large language models (LLMs) are a type of generative AI focused on understanding and generating human-like text. Powered by vast amounts of text training data, these models allow CX leaders to shift away from manual efforts and use artificial intelligence to automate content creation, improve decision-making, and deliver human-like experiences.

Why out-of-the-box solutions don't work

While the developments in Generative AI and LLMs are exciting, these are powerful technologies that require extensive fine-tuning and customization to align with enterprise-specific needs. Out of the box, they raise issues of bias and inaccuracy, and they lack analytics, among other gaps.

Most LLMs are trained on general-purpose data and lack domain-specific knowledge. As a result, out-of-the-box LLM-powered solutions are not realistic for enterprise use: they need significant adaptations, controls, safety rails, and deep investments of time and resources.

Partner with us for Trustworthy AI

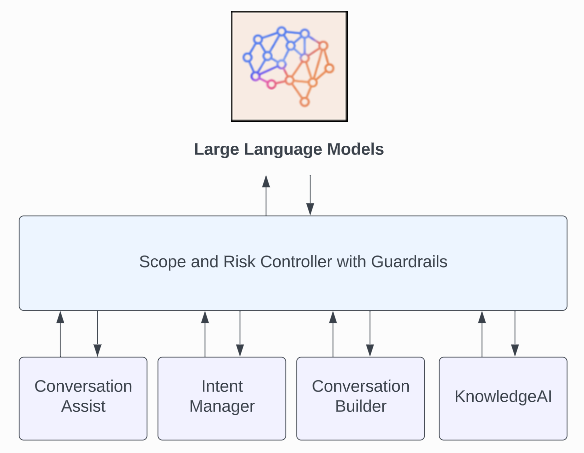

LivePerson makes the power of LLMs accessible to your enterprise in four key ways:

- Enterprise-grade data: Augment LLMs with the world’s largest conversational data set, drawn from a billion monthly interactions.

- Human optimization at scale: Keep conversations grounded, factual, and relevant to your industry with over 350,000 skilled humans in the loop, enhancing models continuously.

- Impactful insights made easy: Accelerate better decision making with enterprise-level analytics and reporting that automatically delivers actionable insights.

- Responsible AI from Day One: Reduce risk of bias by partnering with the founders of EqualAI, spearheading standards and certification for responsible, safe, and secure AI since 2018.

Our solution

You can deliver an omnichannel customer experience that’s powered by state-of-the-art LLMs. Our solution helps you to reduce costs, improve resolution times, and boost productivity.

AI safety tools

Guardrails and safety measures are put in place to provide safe and secure bot and agent responses.

Build customer trust, stay compliant, and ensure accurate representation of your brand with the following:

- Enterprise-grade safety controls that validate and properly navigate inbound/outbound conversations across channels leveraging LLMs.

- Hallucination detection for URLs, phone numbers, and email addresses.

- Prompting and guidance to focus conversations on a defined scope of knowledge, tested across hundreds of chatbots for safety.

- Agents in the loop with real-time response validation, allowing agents to review, edit, and approve responses.
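To make the idea of hallucination detection for URLs, phone numbers, and email addresses more concrete, here is a minimal sketch in Python. It is an illustration only, not LivePerson's implementation: the function names, the regular expressions, and the rule "a contact detail is unsupported if it does not appear in the source content" are all assumptions made for this example.

```python
import re

# Hypothetical, deliberately simple patterns (assumptions for this sketch).
URL_RE = re.compile(r"https?://[^\s<>\"')]+")
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def normalize_phone(raw: str) -> str:
    """Keep digits only so that formatting differences do not matter."""
    return re.sub(r"\D", "", raw)


def extract_contacts(text: str) -> dict[str, set[str]]:
    """Collect the URLs, email addresses, and phone numbers found in text."""
    urls = {u.rstrip(".,;:!?") for u in URL_RE.findall(text)}
    emails = {e.lower() for e in EMAIL_RE.findall(text)}
    phones = {normalize_phone(p) for p in PHONE_RE.findall(text)}
    return {"urls": urls, "emails": emails, "phones": phones}


def find_unsupported(response: str, sources: list[str]) -> dict[str, set[str]]:
    """Return contact details in the response that no source contains."""
    known = extract_contacts("\n".join(sources))
    found = extract_contacts(response)
    return {kind: found[kind] - known[kind] for kind in found}


if __name__ == "__main__":
    sources = [
        "Contact us at support@example.com or 1-800-555-0100. "
        "See https://example.com/help."
    ]
    response = (
        "Call 1 (800) 555-0100 or 1-800-555-0199, email billing@example.com, "
        "or visit https://example.com/help."
    )
    print(find_unsupported(response, sources))
```

In this sketch, anything returned by `find_unsupported` would be a candidate for removal or for review by an agent before the response is sent.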

PCI and PII masking

When you use LivePerson’s Generative AI solution, PCI data (Payment Card Industry data) is always masked before being sent to the LLM service.

PII (Personally Identifiable Information) can also be masked upon your request. Be aware that doing so can cause some increased latency. It can also inhibit an optimal consumer experience because the omitted context might result in less relevant, unpredictable, or junk responses from the LLM service. To learn more about turning on PII masking, contact your LivePerson representative.
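As a rough illustration of what masking payment card numbers before a message reaches an LLM service can look like, consider the following Python sketch. It is not LivePerson's masking logic. The regular expression, the `[CARD_NUMBER]` placeholder, and the use of the Luhn checksum to reduce false positives are assumptions chosen for this example.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by
# single spaces or hyphens (an assumption for this sketch).
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def mask_card_numbers(text: str, placeholder: str = "[CARD_NUMBER]") -> str:
    """Replace Luhn-valid card-like numbers with a placeholder."""

    def _replace(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return placeholder if luhn_valid(digits) else match.group()

    return CARD_RE.sub(_replace, text)


if __name__ == "__main__":
    message = "My card is 4111 1111 1111 1111 and my order is 1234567890123456."
    print(mask_card_numbers(message))
```

The sketch also hints at the trade-off described above: every masked value is context that the LLM service no longer sees, so broader masking can make responses less relevant.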

Responsible AI usage

LivePerson’s Generative AI solutions may leverage Azure OpenAI APIs. As a result, when you use our Generative AI features and tools, we ask that you please comply with the code of conduct for the Azure OpenAI Service to ensure you’re using them safely and responsibly.

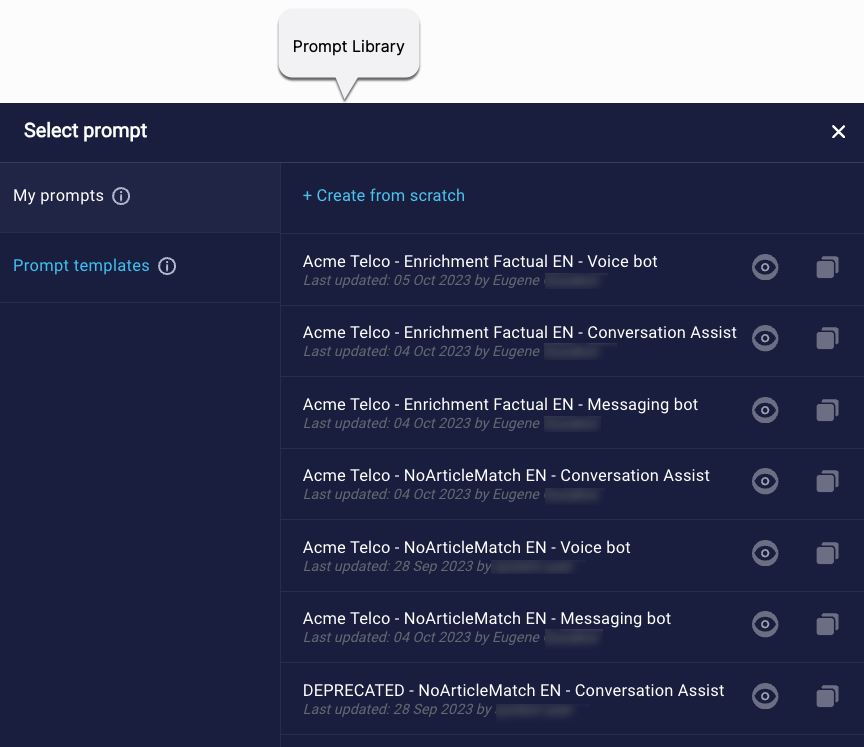

Prompt Library

Use LivePerson's Prompt Library to create and manage the prompts used in your Generative AI solution. Key benefits include:

- Customization that aligns with your brand’s identity: Tailor prompts to reflect your brand’s unique voice and tone.

- Efficiency and cost savings: Quickly deploy Generative AI conversational assistance and LLM-powered bots using pre-built prompts, validated by LivePerson’s data science experts, slashing time to value and operational costs.

- An enhanced consumer experience: Use well-crafted, customized prompts for smoother, more personalized responses, driving customer satisfaction and greater containment.

- Self-service changes: You’ve got control. Make prompt changes whenever you require.
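To give a feel for what tailoring a prompt to a brand's voice can mean in practice, here is a small, hypothetical Python sketch. It does not reflect the Prompt Library's actual format or API. The template text, the placeholder names, and the `build_prompt` function are invented for illustration.

```python
from string import Template

# Hypothetical prompt template; the wording and placeholder names are
# assumptions for this sketch, not a LivePerson-provided prompt.
BRAND_PROMPT = Template(
    "You are a virtual assistant for $brand_name. "
    "Respond in a $tone tone. "
    "Answer only from the knowledge provided below. "
    "If the answer is not there, say that you do not know.\n\n"
    "Knowledge:\n$knowledge\n\n"
    "Customer question: $question"
)


def build_prompt(brand_name: str, tone: str, knowledge: str, question: str) -> str:
    """Fill the template; a missing placeholder value raises KeyError."""
    return BRAND_PROMPT.substitute(
        brand_name=brand_name,
        tone=tone,
        knowledge=knowledge,
        question=question,
    )


if __name__ == "__main__":
    print(
        build_prompt(
            brand_name="Acme Telecom",
            tone="friendly and concise",
            knowledge="Roaming packs can be activated in the mobile app.",
            question="How do I turn on roaming?",
        )
    )
```

Keeping the brand name and tone as separate placeholders means a change to the brand voice touches one value rather than every prompt.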

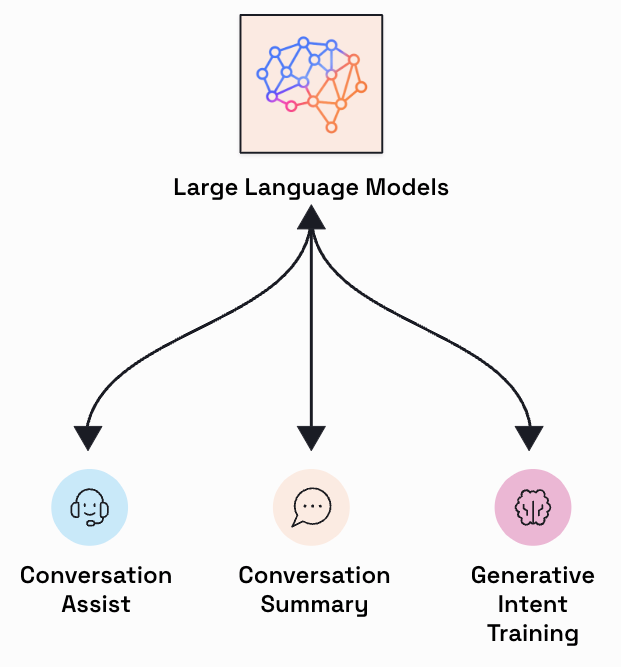

Conversation Copilot

Any person in your company can be the human in the loop and provide their expert opinion.

Reduce agent onboarding and training times, deliver consistent customer experiences, and realize faster time to value with the following:

- Conversation Assist: Offer your agents LLM-powered conversation recommendations for rapid resolution.

- Automated Conversation Summary: Ensure your agents have the context they need, preventing customer repetition across Voice and Messaging channels.

- Generative Intent Training: Help your AI builders develop your NLU taxonomy faster.

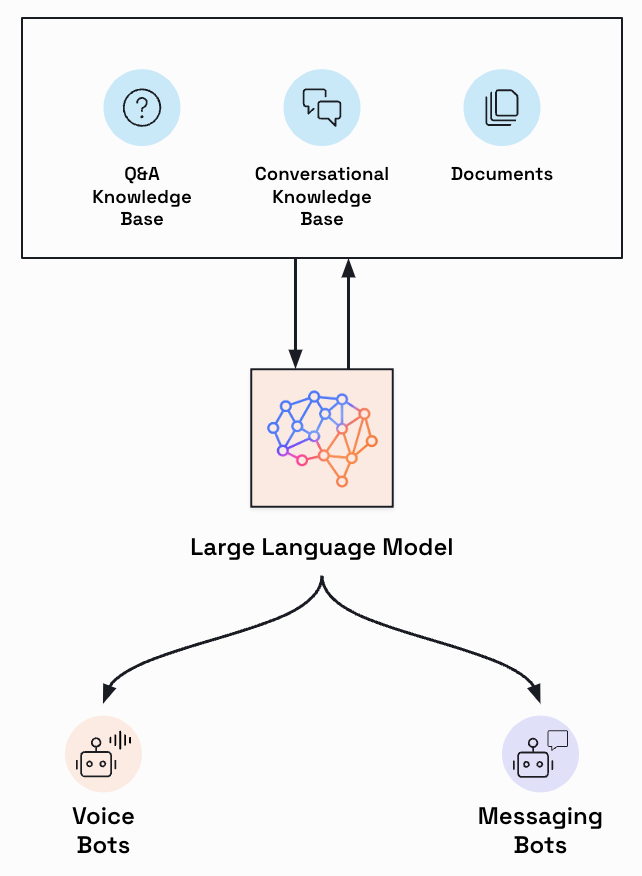

Conversation Autopilot

Virtual assistants can leverage your content to create ChatGPT-like conversational experiences.

Reduce development time, realize rapid deployment, lower operational costs, and increase efficiency and CSAT with the following:

- LivePerson Conversation Builder: Build automated virtual assistants that provide context-aware, secure, and up-to-date conversations across Voice and Messaging channels. Elevate the experiences of your consumers with LLM-powered Routing AI agents and KnowledgeAI agents.

- KnowledgeAI™: Integrate your knowledge content (website, PDF, etc.) to fuel powerful conversations.

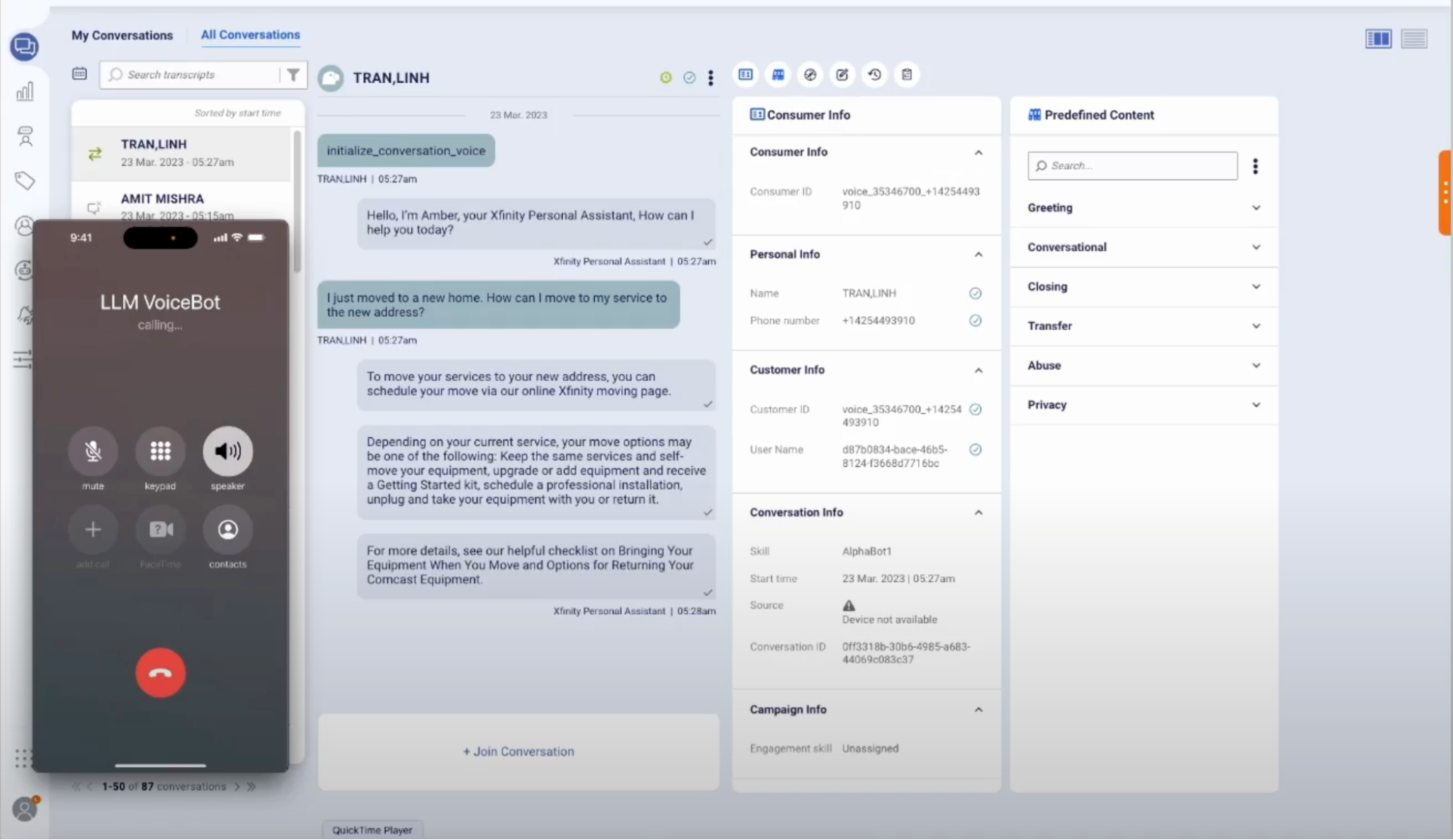

Voice and Messaging AI

You can extend LLM-powered and conversational automation experiences to both Voice and Messaging channels.

Reduce costs, improve omnichannel customer experiences, and boost agent retention in the contact center with LivePerson Conversation Builder. Build LLM-powered Voice bots and Messaging bots that offer warm, natural, and efficient experiences. Experiences that are, well, human-like. You can integrate with third-party platforms (CRM, customer data platforms, etc.) and other business systems to provide rich data and allow conversations to power any business action.

Generative AI reporting

Within our Conversational Cloud, Report Center's analytics and insights help you to build, measure, and improve your customer experience with Generative AI.

Improve operational efficiency, mitigate risks, and realize an excellent customer experience with the following:

- Generative AI reporting dashboard: Use this within the Conversational Cloud to optimize LLM capabilities.

- Safety and usability metrics: These are powered by state-of-the-art content moderation.

- LLM conversational performance: Measure CSAT, MCS, and more.

Get started
